Table of Contents

- Introduction

- What is Data Preprocessing?

- Why It Is Important

- Steps in Data Cleaning

- Example

- Tools Used

- Benefits

- Common Mistakes

- Conclusion

Introduction

When beginners start learning Machine Learning or Data Science, they often believe that building and training models is the most important part.

It may look like the core task—but in reality, it is not.

The real and most time-consuming part of any data science project is:

- Data collection

- Data preprocessing

- Exploratory Data Analysis (EDA)

These steps are often ignored because they feel repetitive and less exciting. However, they are the foundation of a successful model.

Many beginners achieve 90%+ accuracy and assume their model is performing well. But in real-world scenarios, this can be misleading due to:

- Noisy data

- Poor preprocessing

- Overfitting

In practical applications, data quality matters more than model accuracy.

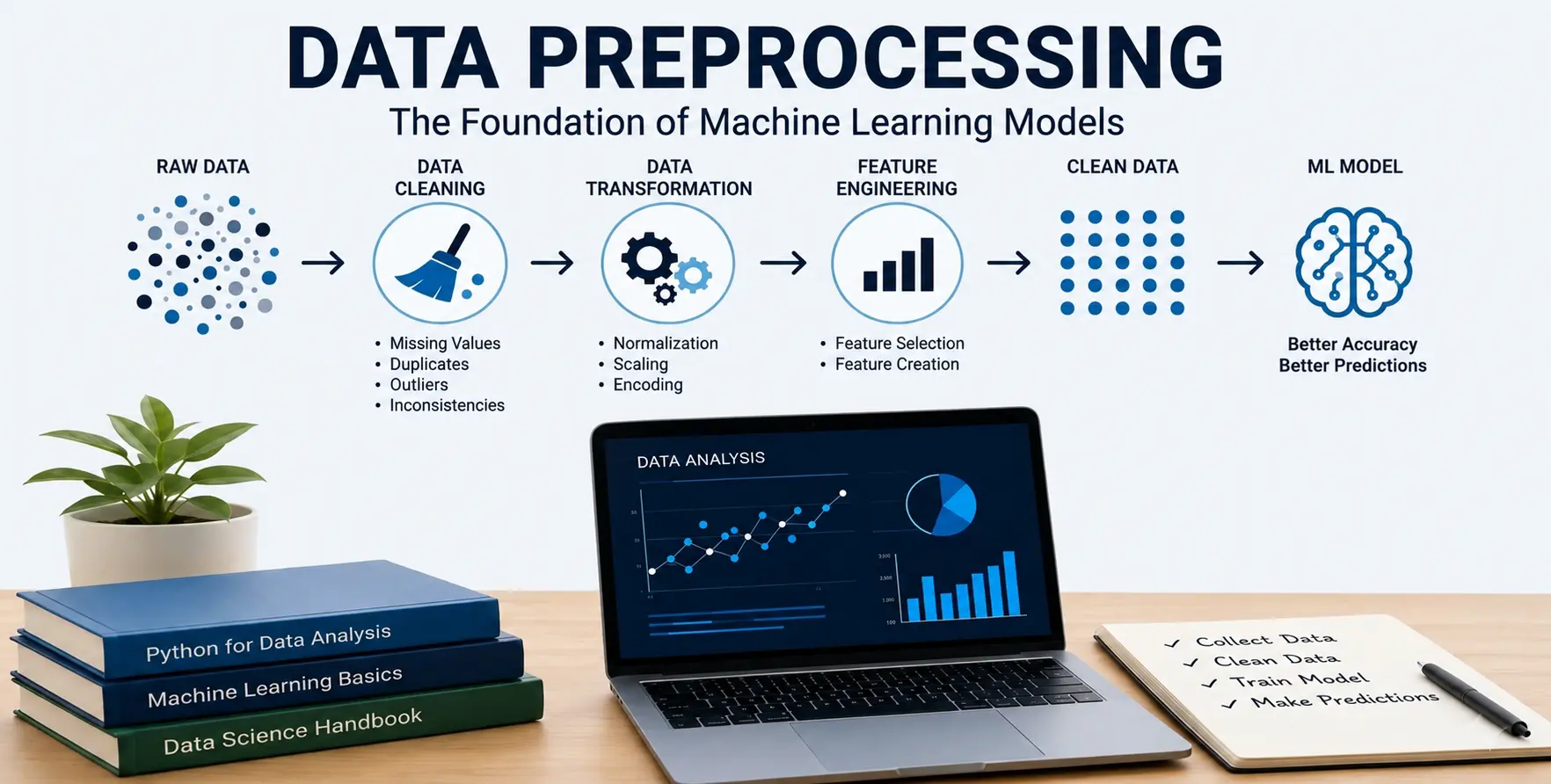

What is Data Preprocessing?

Data preprocessing is the process of transforming raw, unstructured data into a clean and usable format for Machine Learning models.

Real-world data is rarely perfect. It often contains:

- Missing values

- Errors and inconsistencies

- Duplicate records

- Irrelevant information

Preprocessing ensures that the data is structured, clean, and ready for analysis.

In simple terms:

Data preprocessing is the step where raw data becomes meaningful input for a model.

Why It Is Important

Machine Learning models learn patterns from data. If the data is poor, the model will learn incorrect patterns.

Key reasons why preprocessing is important:

- Improves data quality

- Reduces noise and errors

- Enhances model performance

- Prevents overfitting

- Ensures reliable predictions

A simple model trained on clean data often performs better than a complex model trained on poor data.

Steps in Data Cleaning

Data cleaning is a critical part of preprocessing. Below are the common steps:

1. Handling Missing Values

Missing data is very common in real datasets.

Common approaches:

- Remove missing records

- Fill using mean, median, or mode

- Use advanced imputation techniques

Important Insight:

This step requires domain knowledge. For example:

- In financial data, replacing missing values with mean or median can lead to completely wrong conclusions

- In healthcare, deleting records may remove critical information

Blindly applying methods can cause serious issues.

2. Removing Duplicates

- Duplicate records can bias the dataset

- Identify repeated entries

- Remove unnecessary duplicates

3. Handling Outliers

Outliers are extreme values that can distort model performance.

- Detect using statistical methods

- Remove or cap values carefully

Again, domain understanding is important. Some outliers are valid and should not be removed.

4. Fixing Inconsistent Data

Data may have inconsistent formats such as:

- "Male" vs "M"

- Different date formats

Standardizing values improves data quality.

5. Feature Scaling

Some algorithms require scaled data.

- Normalization

- Standardization

6. Encoding Categorical Data

Machine Learning models cannot directly understand text.

Convert categories into numerical values using:

- Label Encoding

- One-Hot Encoding

Example

Imagine you are building a model to predict loan approval.

Raw dataset issues:

- Missing income values

- Duplicate customer entries

- Outliers in salary

- Inconsistent job titles

After preprocessing:

- Missing values are handled carefully using domain logic

- Duplicates are removed

- Outliers are analyzed before removal

- Job titles are standardized

Now the dataset is clean and reliable for training.

Tools Used

- Python libraries like Pandas and NumPy

- Scikit-learn for preprocessing techniques

- Data visualization libraries for EDA

Benefits

- Improved model accuracy

- Better generalization to real-world data

- Reduced errors

- More reliable predictions

- Strong foundation for Machine Learning models

Common Mistakes

Beginners often focus only on model building and ignore data quality.

- Skipping preprocessing steps

- Blindly filling missing values without domain understanding

- Removing outliers without analysis

- Ignoring data leakage

- Trusting high accuracy without validation

Reality Check

A model with 90%+ accuracy is not always a good model.

It may happen due to:

- Overfitting

- Noisy or biased data

- Improper preprocessing

Such models often fail in real-world scenarios.

Conclusion

Data preprocessing is one of the most critical steps in Data Science and Machine Learning.

While model training gets most of the attention, the real impact comes from:

- Clean data

- Proper preprocessing

- Strong domain understanding

Without domain expertise, even correct preprocessing techniques can lead to wrong results.

If your data is good, even a simple model can perform well.

If your data is poor, even advanced models will fail.

In real-world projects, success depends less on the model and more on how well you understand and prepare your data.